In 2026, the strategic value of a company’s AI toolbox is best measured not only by the AI models it adopts, but also by the operational maturity around them: standardized processes, well-defined intervention points, demonstrated productivity improvements, and governance frameworks that enable secure and scalable deployment. When organizations can clearly delineate assistive AI functions, autonomous agent capabilities, and areas requiring human validation, they move AI usage from tactical experimentation to foundational business transformation.

Level of Engagement

- Frequent – used daily/weekly by multiple team members

- Medium – important but more situational or team-specific

- Light – early-stage, experiments, or niche use

1. General-Purpose AI Assistants (Everyone’s Helpers)

General-purpose assistants are best positioned as a “cognitive layer” across the company: they reduce time-to-first-draft, accelerate understanding, and create a shared baseline for thinking. The leadership opportunity here is standardizing prompt patterns, review expectations, and safe-use boundaries so quality stays consistent even as usage scales. Teams that win don’t just use AI assistants more; they use them more predictably.

AI “brains” you can ask about almost anything – ideas, explanations, research, and drafts.

ChatGPT – Frequent

- Used for content drafts, UX copy, case studies, social posts, coding help, documentation, and “explain this” questions.

- “Atlas browser” is specifically used to read/summarize web pages and research papers.

Gemini – Medium

- Deep research, keyword exploration, learning new topics, plus command-line usage for scripts and batch development tasks.

Claude (Chat / CoWork) – Medium

- Used for careful reasoning, coding, debugging, and research.

- Valued for accuracy and security.

2. Coding & Developer Productivity

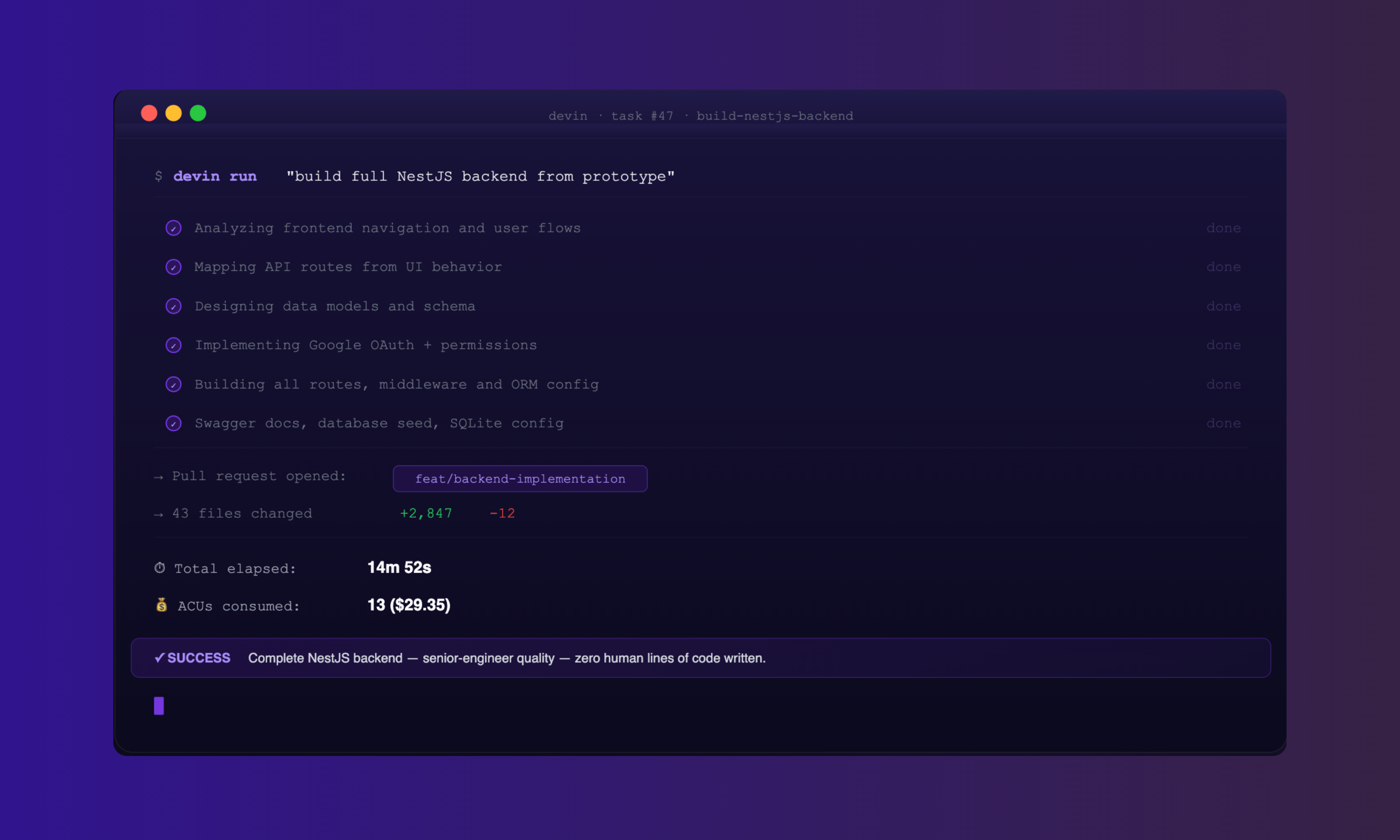

This is where AI value becomes easiest to prove. The strongest signal in mature teams is a clear “agent → human review → test → merge” loop with defined checkpoints. The real differentiator isn’t autocomplete; it’s accelerating large upgrades, multi-file refactors, and repository-wide change management without sacrificing correctness. The leadership move is to formalize what tasks AI can do end-to-end, what requires peer review, and what must be validated by human-led automated tests.
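As a rough illustration of the “agent → human review → test → merge” loop, the checkpoints can be modeled as a small state machine. This is a minimal sketch, not a description of any team’s actual tooling: the `Stage` and `ChangeRequest` names, the sign-off labels, and the rule that a rejection sends the change back to the agent are all assumptions made for the example.

```python
from enum import Enum


class Stage(Enum):
    AGENT_DRAFT = "agent_draft"
    HUMAN_REVIEW = "human_review"
    TEST = "test"
    MERGE = "merge"


# Checkpoint order, taken from the "agent -> human review -> test -> merge" loop.
PIPELINE = [Stage.AGENT_DRAFT, Stage.HUMAN_REVIEW, Stage.TEST, Stage.MERGE]


class ChangeRequest:
    """Tracks one AI-generated change as it moves through the checkpoints."""

    def __init__(self, title: str) -> None:
        self.title = title
        self.stage = Stage.AGENT_DRAFT
        # Each entry records the stage that was completed and who signed off.
        self.history: list[tuple[Stage, str]] = []

    def advance(self, approved_by: str) -> Stage:
        """Move to the next checkpoint, recording who approved the current one."""
        index = PIPELINE.index(self.stage)
        if index == len(PIPELINE) - 1:
            raise ValueError("change is already merged")
        self.history.append((self.stage, approved_by))
        self.stage = PIPELINE[index + 1]
        return self.stage

    def reject(self, rejected_by: str) -> Stage:
        """Send the change back to the agent for another pass (assumed policy)."""
        if self.stage is Stage.MERGE:
            raise ValueError("a merged change cannot be rejected")
        self.history.append((self.stage, f"rejected by {rejected_by}"))
        self.stage = Stage.AGENT_DRAFT
        return self.stage


if __name__ == "__main__":
    change = ChangeRequest("Upgrade framework dependencies")
    change.advance("coding-agent")   # agent draft is ready for review
    change.advance("peer-reviewer")  # human review passed
    change.advance("ci-tests")       # tests passed
    print(change.stage.value)
```

The point of the sketch is that every transition is explicit and attributable, so it is always clear which checkpoint a change has passed and who signed off on it.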

Tools that help developers write, understand, test, and upgrade code faster.

CodeX (using GPT-5 or higher) – Frequent

- Internal AI coding environment that acts as a primary development agent for multiple engineers across different teams

- Command-line and API-based AI that can take a first pass at big upgrades (e.g., dependency/framework updates), followed by developers’ review.

- Clients report 5–6x faster upgrades and up to ~80% completion on the first pass.

Cursor – Frequent

- Full AI-powered code editor for multi-file edits, repo-wide changes, and debugging.

- Used across “almost all development projects” for frontend work.

- Success Matrix shows 4–16 hours/week saved per developer.

GitHub Copilot / Microsoft Copilot – Frequent

- Inline “pair programmer” in VS Code/JetBrains and Microsoft tools.

- Great for everyday coding speed improvement.

Claude Code – Medium

- Agentic coding tool (often terminal-based, with IDE integration option) that can scan whole repos, plan upgrades, and apply edits.

Playwright (with LLM support) – Light

- Test framework that, when paired with LLMs, can capture and generate automated tests to boost coverage after upgrades.

Harness AIDA – Light

- AI inside deployment pipelines for pipeline management, deployment verification, and governance.

3. Automation & Workflow Orchestration

Orchestration is how AI becomes “real work” instead of “nice answers.” When teams connect AI to systems of record and define triggers, approvals, and rollback paths, they create compounding leverage. The leadership lens here is governance: log what ran, what it changed, who approved it, and how exceptions are handled. That’s how you scale automation without creating hidden operational risk.
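One way to picture the governance record described above (what ran, what it changed, who approved it, and how exceptions were handled) is as a single structured log entry per automation run. The sketch below is illustrative only: the `AutomationRunRecord` type, its field names, and the sample workflow name are hypothetical and not tied to n8n or any other tool in this list.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


def _utc_now() -> str:
    """Timestamp for the record, in ISO 8601 format (UTC)."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AutomationRunRecord:
    workflow: str                     # what ran
    changes: list[str]                # what it changed
    approved_by: Optional[str]        # who approved it (None if nobody did)
    exception: Optional[str] = None   # how an exception was handled, if any
    ran_at: str = field(default_factory=_utc_now)


def to_log_line(record: AutomationRunRecord) -> str:
    """Serialize one run as a single JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = AutomationRunRecord(
        workflow="sync-crm-to-billing",      # hypothetical workflow name
        changes=["updated 3 invoices"],
        approved_by="ops-lead",
    )
    print(to_log_line(record))
```

Keeping each run to one self-describing line makes it straightforward to search for unapproved runs or unhandled exceptions later.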

Tools that move data and tasks around so people don’t have to.

n8n – Frequent

- Visual automation tool to connect services and orchestrate workflows.

- Used daily as “glue” between systems.

Vercept (Vy app) – Light–Medium

- Lets AI control the computer UI (clicks, typing, navigation) for automated UI tasks and RPA-like workflows.

- Used occasionally where no API exists.

4. Design, UI, and Prototyping

In product teams, speed-to-clarity matters more than speed-to-polish. These tools compress the distance between an idea and a tangible UI artifact, which improves alignment and reduces rework. The thought leadership angle: treat AI-generated UI as a conversation starter, then apply human taste, accessibility discipline, and real user context to turn prototypes into credible product decisions.

Tools that turn written ideas into screens and layouts quickly.

v0.app (by Vercel) – Medium

- Generates React/Tailwind components and pages from text prompts.

- Great for quick mockups and prototypes.

Lovable – Light

- Called out separately as a design-focused tool of interest for app/UI building.

- Not yet broadly adopted company-wide, but gaining momentum.

Other design tools of interest (Relume, Midjourney, etc.) – Light

- Mentioned as tools team members would like to explore for richer visuals and design.

5. Content, Communication & Writing

For content, AI maturity looks like “brand accuracy at speed.” The win is not more output—it’s consistent voice, faster iteration, and tighter review cycles. The leadership posture: codify what “on-brand” means (examples, do/don’t lists, tone anchors), and use AI to accelerate drafts while keeping final editorial judgment in human hands.

Tools that help us write clearly, quickly, and on-brand.

Grammarly AI – Medium

- Used for clearer emails and proposals, tone adjustment, and polishing language.

- Especially helpful for non-native English speakers.

- Survey shows approx. 1–2 hours/week saved.

ChatGPT – Frequent

- Daily assistant for social post concepts, UX copywriting, and case study drafts.

- Also used broadly for content ideation and review.

What this toolbox communicates, implicitly and powerfully, is that Devblock is moving beyond “AI usage” into “AI operations.” We’ve already defined engagement levels, surfaced measurable productivity impacts, and separated experimentation from core usage. The next strategic step is to add a governance layer: how we evaluate outputs, how we handle security and client data, and what “done” means for AI-assisted work.

That’s the difference between a modern toolkit and a scalable, defensible delivery system.